Applying cyber threat intelligence analysis to disinformation

The detection and disruption of disinformation on social media is an emerging field in which investigators are slowly building, mapping and applying methodologies to transform what has been perceived as an investigative art into more of a defined practice. Many of these methodologies are borrowed from existing fields, such as media & communications theory, marketing, psychology and linguistics.

The field of cyber threat intelligence (CTI) has the potential to bring many existing frameworks into the study of disinformation. CTI uses intelligence methodologies to analyse and monitor adversaries conducting cyber operations against a variety of organisations. Although CTI is itself an emerging field, it has reached a level of analytical maturity from which disinformation studies can benefit, thanks to decades of analysis of adversaries and their tactics, techniques and procedures (TTPs). The application of CTI to disinformation has been given a variety of names, one of them being “misinfosec”, echoing the information security field to which CTI belongs.

Researchers attempting to outline a standardized methodology for the analysis of disinformation have already begun borrowing CTI frameworks. One such widely adopted CTI framework is MITRE’s ATT&CK framework, which outlines standardized adversary TTPs. This allows analysts to develop a common language to describe the capabilities of threat actors when compromising victims. The CogSec Collaborative has adapted the ATT&CK framework to disinformation in the form of the Adversarial Misinformation and Influence Tactics and Techniques (AM!TT) framework. Using this framework to study disinformation operations gives analysts a standardised analytical language, allowing for greater collaboration and intelligence sharing between organisations.

Furthermore, both ATT&CK and AM!TT can be shared between organisations using machine-readable formats such as Structured Threat Information eXpression (STIX). STIX is already widely used in the CTI sector and allows for the rapid and consistent exchange of observables and information amongst stakeholders.
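To make the idea of machine-readable sharing more concrete, here is a minimal sketch of how a single technique could be expressed as a STIX 2.1 `attack-pattern` object. It only uses the Python standard library and builds the object as a plain dictionary. The technique name, the `example-framework` source name and the `T0000` identifier are placeholders invented for illustration; they are not taken from ATT&CK or AM!TT.

```python
import json
import uuid
from datetime import datetime, timezone


def make_attack_pattern(name: str, description: str, external_id: str) -> dict:
    """Build a minimal STIX 2.1 attack-pattern object as a plain dict."""
    # STIX timestamps are RFC 3339 strings in UTC; we keep millisecond precision.
    now = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.%f")[:-3] + "Z"
    return {
        "type": "attack-pattern",
        "spec_version": "2.1",
        # STIX identifiers take the form "<object-type>--<UUID>".
        "id": f"attack-pattern--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        "description": description,
        "external_references": [
            # Placeholder reference: not a real framework entry.
            {"source_name": "example-framework", "external_id": external_id}
        ],
    }


technique = make_attack_pattern(
    name="Amplify narrative with inauthentic accounts",  # hypothetical name
    description="Illustrative technique entry, not taken from AM!TT.",
    external_id="T0000",  # placeholder identifier
)
print(json.dumps(technique, indent=2))
```

Because the result is plain JSON, any organisation that understands STIX can ingest the same object without agreeing on a bespoke format first.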

While such efforts have made significant strides in adapting CTI models to disinformation studies, one core CTI framework has yet to be adapted to disinformation: the Diamond Model of Intrusion. This article will attempt to provide insight into what this framework achieves and how it is used by analysts to produce threat intelligence. Building on this model, this article will also propose an adapted Diamond Model for disinformation and show how it can be used to study, detect and disrupt information operations.

The Diamond Model of Intrusion

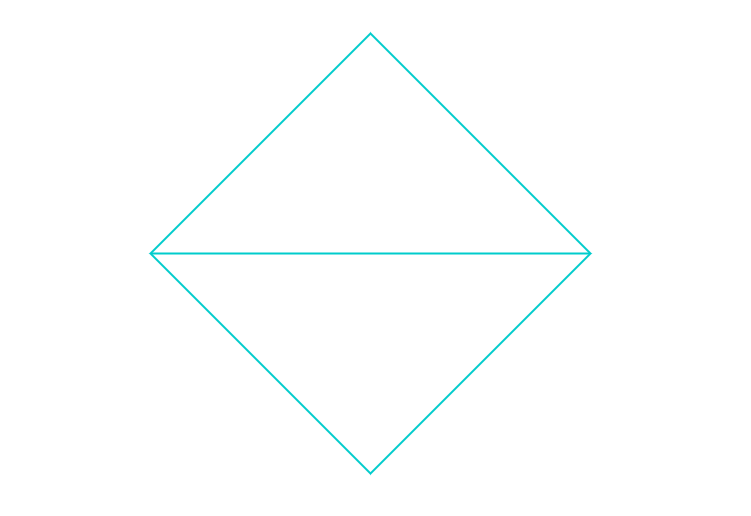

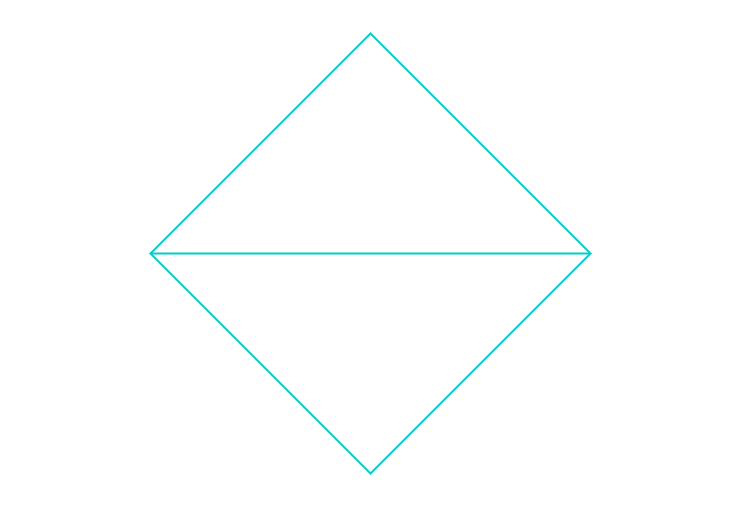

The Diamond Model of Intrusion is an analytical framework often used in threat intelligence analysis when investigating cyber operations targeting organisations. The framework was originally outlined in a paper published in 2013 by Sergio Caltagirone, Andrew Pendergast and Christopher Betz titled “The Diamond Model of Intrusion Analysis”. The paper attempts to apply scientific methods to intrusion analysis. The model considers observable “atomic” elements of an intrusion, such as Indicators of Compromise (IOCs), and models the “underlying relationships” of these atomic elements between four main features: Adversaries, Infrastructure, Capabilities, and Victims. The model describes intrusions as events in which “an adversary deploys a capability over some infrastructure against a victim.”

These four features and their relationships are hence modelled as a diamond, which analysts can use to pivot from one point of the diamond to the other based on atomic observables, allowing for the systematic and evidence-based reconstruction of cyber intrusions.

Examples of atomic observables which can be found by analysts for each feature can include the following:

Adversary: Identity, IP addresses, Network Assets (infrastructure), Resources

Infrastructure: Command & Control (C2) infrastructure, IP addresses, domain names, email accounts

Capability: Malware, Exploits, Certificates

Victim: Identity, Sector, Geography, Network assets (infrastructure, vulnerabilities)

Collecting these observables through various means allows analysts to pivot from victims to adversaries via infrastructure and capabilities, hence unravelling the thread of cyber intrusions and gaining insight into the adversaries.

For example, a cyber intrusion targeting an organisation is likely to leave artefacts (such as malware samples). Forensic investigation of the victim’s networks will lead to the collection of several malware samples. Reverse engineering these samples will reveal observables pertaining to network infrastructure controlling the malware (such as IP addresses and domain names). Correlating these observables with other intrusions may reveal the specific adversary controlling this network infrastructure.

Hence, we have a way to find the potential adversary responsible for deploying these capabilities over the discovered infrastructure. While the attribution of intrusions to specific actors is not an explicit goal, the framework evidently offers a useful analytical basis from which analysts can start piecing together observables in order to analyse the intrusion.
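The pivot described above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the `DiamondEvent` class and the `pivot_on_infrastructure` helper are names made up for this post, the events are invented, and the IP addresses and domains come from ranges reserved for documentation.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DiamondEvent:
    """One intrusion event described by the four Diamond Model features."""
    name: str
    victim: str
    capabilities: set[str] = field(default_factory=set)    # e.g. malware samples
    infrastructure: set[str] = field(default_factory=set)  # e.g. C2 IPs, domains
    adversary: Optional[str] = None                        # unknown until assessed


def pivot_on_infrastructure(event, known_events):
    """Return (event, shared observables) for known events that overlap."""
    matches = []
    for other in known_events:
        shared = event.infrastructure & other.infrastructure
        if shared:
            matches.append((other, shared))
    return matches


# Invented historical events with an assessed adversary.
past_events = [
    DiamondEvent("2019 intrusion", victim="Org X",
                 infrastructure={"198.51.100.7", "c2.example.net"},
                 adversary="Actor A"),
    DiamondEvent("2020 intrusion", victim="Org Y",
                 infrastructure={"203.0.113.9"},
                 adversary="Actor B"),
]

# New intrusion: infrastructure recovered by reverse engineering malware samples.
new_event = DiamondEvent("new intrusion", victim="Org Z",
                         capabilities={"sample-1"},
                         infrastructure={"c2.example.net", "192.0.2.44"})

for other, shared in pivot_on_infrastructure(new_event, past_events):
    print(f"{new_event.name} overlaps with {other.name} "
          f"({other.adversary}) via {sorted(shared)}")
```

An overlap of this kind is a lead rather than an attribution: infrastructure can be shared, resold or deliberately reused, so the analyst still has to weigh the match against the other features of the diamond.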

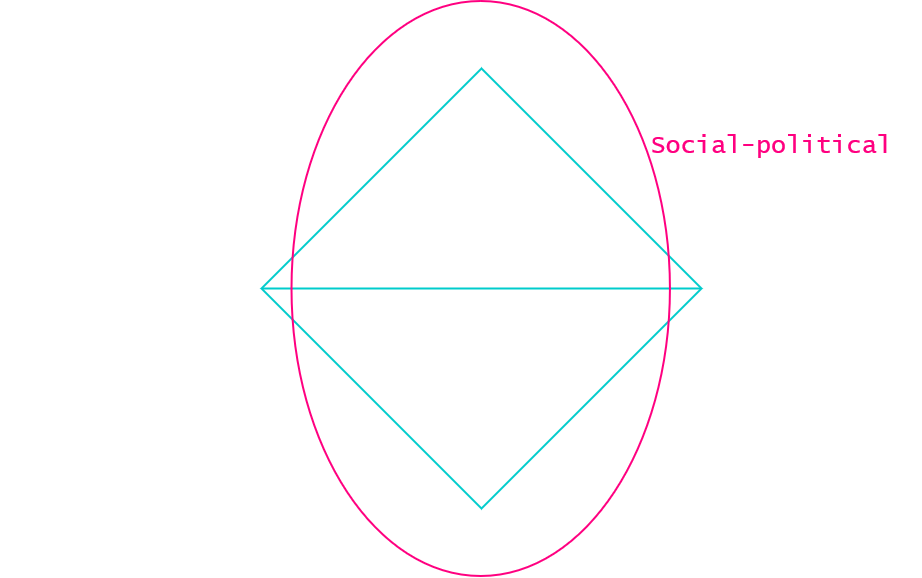

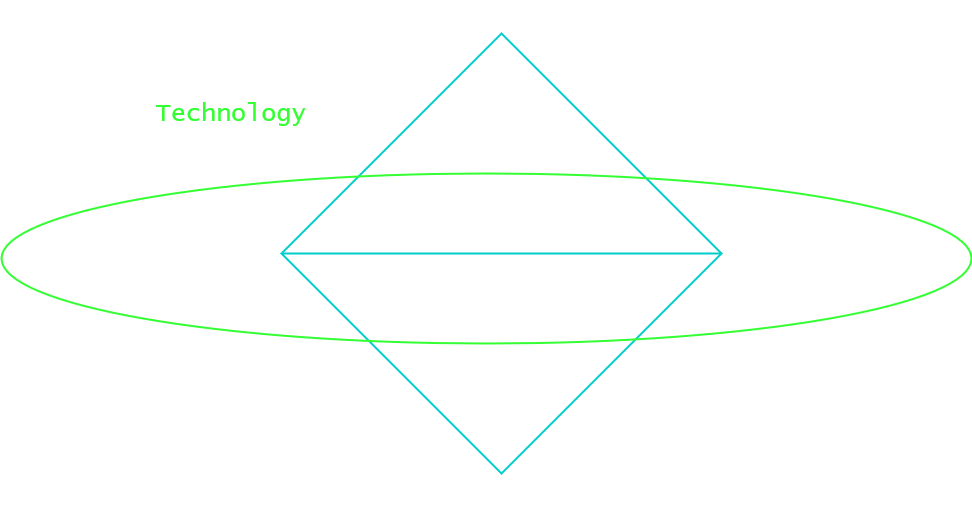

In addition to observables and features that can be used to populate the model when studying intrusions, two further axes of analysis can be considered by looking at relationships between opposite sides of the diamond: the social-political and technology axes.

The first of these, the social-political axis, considers the relationship between the Adversary and Victim features and allows analysts to answer the following questions using observables. Who is the victim? What sector and geography does the victim operate in? What are the possible social-political motivations for an adversary to target this organisation? What is the wider social-political context for this intrusion? What does this indicate about the adversary?

The second of these is the technology axis, which considers the relationship between the Infrastructure and Capability features and prompts a similar set of questions. Which tactics, techniques and procedures (TTPs) have been employed for this intrusion? What is the level of sophistication and resources employed? How have these resources been employed?

Finally, the original paper outlines a list of “meta-features” that permeate this model without meriting explicit features. These meta-features can nevertheless be used by analysts to pivot between features, although they provide a lower threshold of evidence than observables. These include:

- Time: When did different events occur? What does the operational tempo of the intrusion reveal about the adversary?

- Phases: Which specific phases did the intrusion follow? Are there intermediary stages within the Infrastructure or Capability features that reveal a specific behavior?

- Result: What was the result of the intrusion? Did the adversary achieve their objectives? What was the impact on the victim?

- Resources: Which software, information, funds and facilities did the adversary leverage? How did they obtain these resources?
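As a small illustration of the Time meta-feature, the sketch below derives two rough indicators of operational tempo from a list of event timestamps: the median gap between consecutive events and the hours of the day in which activity was observed. The timestamps are invented, and the `operational_tempo` function is a hypothetical helper rather than anything defined in the original paper.

```python
from datetime import datetime
from statistics import median

# Invented event timestamps (assumed to be UTC) for a single intrusion.
timestamps = [
    "2021-03-01T08:02:00",
    "2021-03-01T08:47:00",
    "2021-03-01T16:30:00",
    "2021-03-02T09:15:00",
    "2021-03-02T09:20:00",
]


def operational_tempo(stamps):
    """Summarise when events happened and how far apart they were."""
    times = sorted(datetime.fromisoformat(s) for s in stamps)
    # Gap in hours between each pair of consecutive events.
    gaps = [(b - a).total_seconds() / 3600 for a, b in zip(times, times[1:])]
    return {
        "median_gap_hours": median(gaps),
        "active_hours_utc": sorted({t.hour for t in times}),
    }


tempo = operational_tempo(timestamps)
print(tempo)
```

Activity clustered in a narrow band of hours may hint at an adversary's working day and time zone, but as with the other meta-features it is weaker evidence than a hard observable.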

The Diamond Model of Disinformation

How can we apply this analytical model to the analysis of disinformation operations? This question can only be answered by first acknowledging some key differences between studying cyber “intrusions” and disinformation operations. Whilst cyber intrusions primarily operate by targeting victim infrastructure using defined capabilities which seek to exploit known vulnerabilities or weaknesses in such infrastructure, disinformation operations operate in a much broader informational context.

Adversaries conducting disinformation operations look to exploit cultural, social, and political vulnerabilities rather than software vulnerabilities. While software vulnerabilities usually impact specific technologies and have specific effects such as remote code execution (RCE), societal vulnerabilities are porous and dynamic, and exploiting them can produce a variety of effects depending on context. The scope of “Capabilities” must be expanded in order to encompass the variety of narratives and themes that can be pushed by vectors of disinformation in order to exploit these societal vulnerabilities.

Adversaries conducting disinformation operations also do so using a variety of mediums, including social media platforms, media outlets, and individuals (influencers, journalists). The scope of the “Infrastructure” feature of the Diamond Model of Intrusion must be significantly widened to encompass a variety of means used for disinformation beyond servers, IP addresses and domain names.

Given these key differences, we can begin to shape an adapted model for disinformation. In his 2020 book Active Measures, Thomas Rid sketches a possible model following his review of historical disinformation operations:

“Disinformation operations rely upon tactics that exploit technology, political divisions, and tensions between allies. Political fissures and friction are a function of the target. The design of the divisive material and the craftsmanship of disinformation are a function of the attacker. The technological substrate and the available media platforms are a function of the operational environment. The higher the quality of all three, the more active measures will be- or, put another way, the lesser the political divisions within the target organization, and the more primitive the telecommunications environment, the more value the attacker will have to add at all stages of an operation in order to make and sustain an active measure”.

Rid mentions two key features here. First, what he calls the “divisive material and craftsmanship of disinformation” evokes the idea of “messages” being the main capability deployed by adversaries, which he also refers to as “payloads” in Active Measures. Second, Rid mentions that “the technological substrate and the available media platforms are a function of the operational environment”. While this phrase strongly echoes the Technology axis of the Diamond Model of Intrusion, the mention of “media platforms” as a vector for disinformation “payloads” or “messages” provides a useful basis for our adapted model.

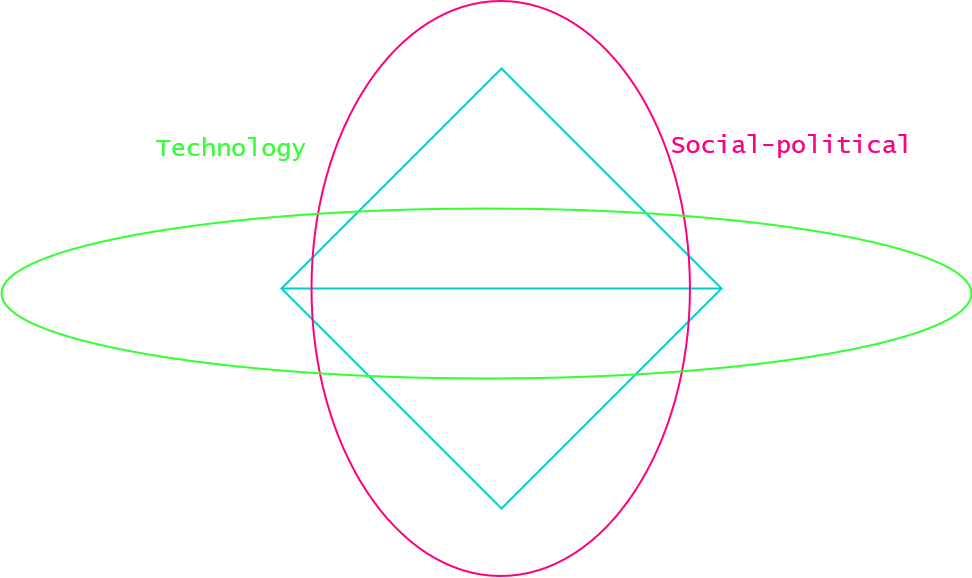

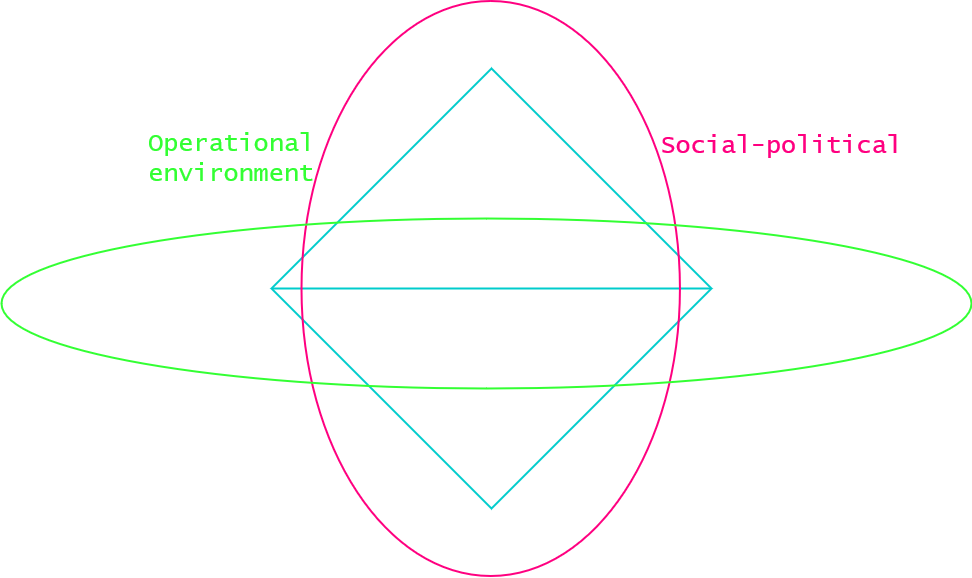

A first proposition is to adapt the features of “Infrastructure” and “Capabilities” to “Mediums” and “Messages” while preserving the “Adversary” and “Victim” features.

We can therefore outline the following observables and their characteristics for each of these features:

Adversaries: Characteristics of adversaries can include their own socio-political identity, intent (political will and strategic goals) and range of capabilities and resources (availability or control of mediums and production of messages). In addition to available resources, the range of capabilities may include the capability to obtain sensitive or compromising information via covert means (HUMINT/SIGINT) as observed in hack and leak operations as well as the capability to effectively craft forgeries.

Mediums: Mediums can include mediums of communication (social media platforms, broadcast networks) as well as specific agents of influence (journalists, public figures, influencers). Mediums can be under varying degrees of explicit or covert control by adversaries. Whilst state-owned media outlets such as RT and Sputnik are explicit mouthpieces for the Russian government, the use of anonymous accounts to promote messages on social media platforms by outfits like the Internet Research Agency (IRA) is harder to attribute directly. Observables include both social and traditional media platforms, accounts, artefacts (text posts, images, videos), algorithmic features (hashtags) and related metadata.

Messages: This can include false information (also referred to as “fake news”), leaked information (as obtained by “hack and leak” operations) as well as true information (perhaps the most potent form of messaging). Messages often reveal the strategic intent behind the campaign, as they look to attack or defend specific narratives that adversaries have an interest in controlling. Social media posts attacking the efficacy of Pfizer COVID-19 vaccines can reveal a desire to erode public trust in vaccines produced by Western pharmaceutical companies, as opposed to other vaccines such as Sputnik V. Observables include the specific themes, narratives and symbols distributed via mediums that try to exploit specific societal vulnerabilities or frictions.

Victims: Characteristics of victims can include their specific socio-political identity, cultural and regional identity, domestic political fissures and friction as well as their societal resilience to disinformation. These characteristics have a direct influence on the ability of both mediums (the domestic media landscape varies widely amongst countries) and messages (political and social frictions also vary widely) to be leveraged effectively to disinform the victim.

We can also adapt the “technology” axis to what Rid calls the “operational environment” in which disinformation operates. This feature allows us to consider a much broader scope of mediums and messages beyond internet-facing infrastructure and host-based capabilities to reflect the wider informational context in which disinformation operations are conducted. This operational environment hence includes media platforms and outlets, accounts, messaging, etc.
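To show how an analyst might pivot within the adapted model, here is a minimal sketch that treats each observed post as a link between a Medium (a platform and account) and a Message (a narrative). Starting from one known account, it finds the narratives that account pushed and then the other accounts pushing the same narratives. The `Post` class, the helper function, the platform names, the accounts and the narrative labels are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    """An observed artefact linking a Medium (account) to a Message (narrative)."""
    platform: str
    account: str
    narrative: str
    hashtags: tuple[str, ...] = ()


def pivot_from_account(posts, platform, account):
    """Medium -> Message -> Medium pivot starting from one seed account."""
    # Index every narrative by the set of (platform, account) pairs pushing it.
    index = defaultdict(set)
    for post in posts:
        index[post.narrative].add((post.platform, post.account))

    seed = (platform, account)
    narratives = {p.narrative for p in posts if (p.platform, p.account) == seed}

    related = set()
    for narrative in narratives:
        related |= index[narrative]
    related.discard(seed)  # the seed account is not its own lead
    return narratives, related


# Invented observations for illustration only.
posts = [
    Post("platform-a", "@acct_one", "vaccine-distrust", ("#example",)),
    Post("platform-a", "@acct_two", "vaccine-distrust"),
    Post("platform-b", "channel_three", "vaccine-distrust"),
    Post("platform-a", "@acct_four", "unrelated-topic"),
]

narratives, related = pivot_from_account(posts, "platform-a", "@acct_one")
print(sorted(narratives))
print(sorted(related))
```

Sharing a narrative does not by itself mean that accounts are coordinated or controlled by the same adversary, since genuine users repeat narratives too. The output is best read as a list of candidate mediums for further analysis.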

Conclusion

The Diamond Model of Disinformation is a proposed framework by which analysts can frame their analysis, allowing them to pivot from observables to different “features”, unravel the chronology and process of a disinformation operation, and gain insight into the adversary and their capabilities.

As with many theories that are outlined in simple blog posts, the utility of this model can only be proven by operationalizing it using examples. This will be the subject of a follow-up piece, which will cover the 2017 #MacronLeaks hack-and-leak operation. It will attempt to apply both the Diamond Model of Intrusion to the cyber intrusion into En Marche and the Diamond Model of Disinformation to the subsequent disinformation campaign.
